
The narrative often sold by AI adoption is one of efficiency. The actual data points to one of sprawl.

We are in the midst of a reckoning with the benefits and costs of AI coding assistants.

Data clearly points to valuable boosts in productivity and skill: A study from Anthropic highlighted a 50% increase in engineer productivity using Claude, and a research paper from the University of Chicago pointed to a 39% increase in code merges post-Cursor adoption.

But the security risks of AI are becoming more apparent as usage increases. Our own Apiiro research reveals 10x more security findings in code shipped by AI-assisted teams.

The speed of AI code adoption makes cost-benefit analysis challenging: organizations and development teams are ramping up usage faster than they can measure the security impact.

The question of AI-assisted code is no longer one of if, but how. Still, security leaders need trustworthy data to make an informed decision about how to promote risk-aware adoption.

Apiiro’s latest research into open source software (OSS) sprawl and API attack surface growth delivers an in-depth look at the impact of explosive code growth on software architecture.

Apiiro, an Agentic Application Security platform, analyzed thousands of code repositories and developers at a Fortune 500 enterprise over a period of two and a half years to determine the impact of AI code assistants on productivity and risk. The results point to a marked risk premium: AI coding assistants deliver real productivity gains, but they expand the attack surface faster than security teams can manually review it.

The research was conducted on a Fortune 500 Apiiro customer and analyzes how adoption of AI coding assistants (specifically GitHub Copilot) correlates with the expansion of the enterprise attack surface. Analysis of our API and OSS inventory reveals a critical side effect: the organizational attack surface is expanding faster than security teams can manage.

The research includes analysis of OSS dependency inventories, API endpoint catalogs, and developer behavior data across 26.5K repositories, 14K developers, 4.7M OSS package records, and nearly 80,000 APIs in code.

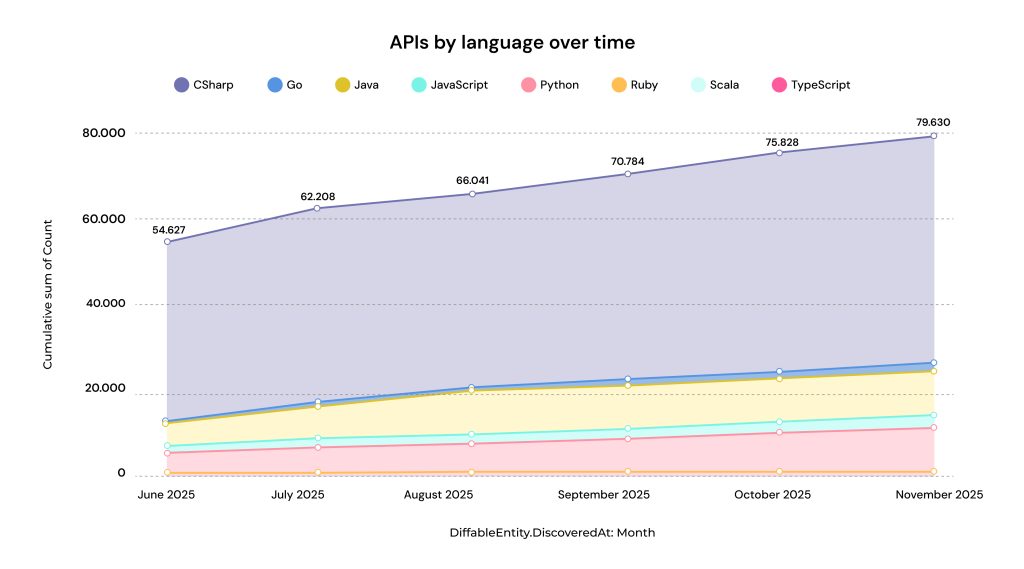

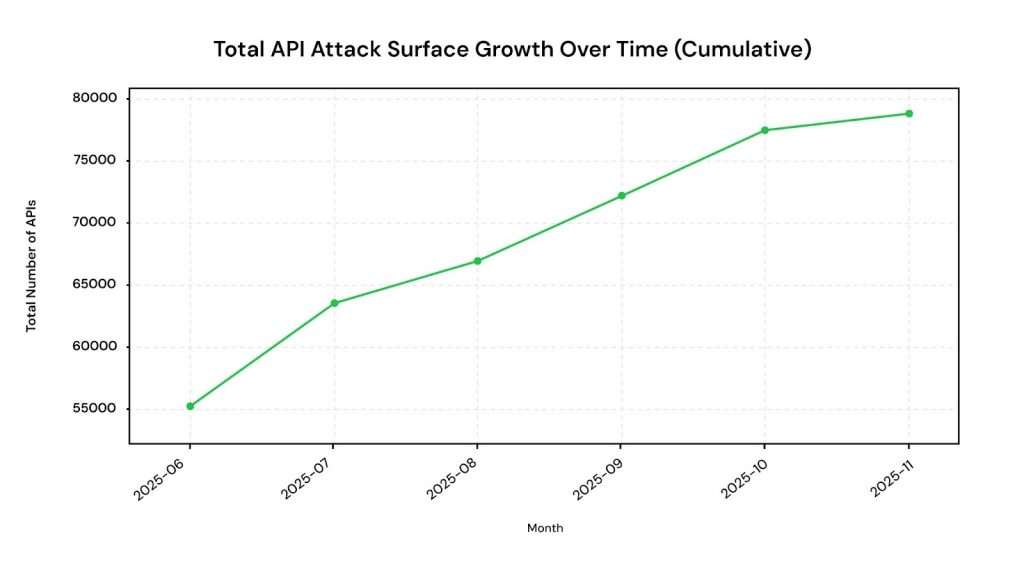

Between June and October 2025, API surface records grew from 54,627 to 79,630 (about +46%, or 25,003 additional records), representing 53,598 unique API definitions in code across 2,033 repositories. Of these, 72.5% are internal controller actions while 27.5% (14,743) are REST-style HTTP endpoints. In other words, the attack surface grew by more than 40% in under six months.
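To make the idea of measuring API surface growth concrete, here is a minimal sketch of how two inventory snapshots could be compared. It is not Apiiro's method: the `Endpoint` record shape, the `surface_delta` helper, and the sample data are all hypothetical and exist only for illustration.

```python
from typing import NamedTuple


class Endpoint(NamedTuple):
    """One API definition found in code (hypothetical record shape)."""
    repo: str
    method: str
    path: str


def surface_delta(before: set[Endpoint], after: set[Endpoint]) -> dict[str, object]:
    """Compare two inventory snapshots and summarize how the surface changed."""
    added = after - before
    removed = before - after
    # Growth is measured on total record count, relative to the earlier snapshot.
    growth_pct = (len(after) - len(before)) / len(before) * 100 if before else float("inf")
    return {
        "added": sorted(added),
        "removed": sorted(removed),
        "growth_pct": round(growth_pct, 1),
    }


# Illustrative data only; not taken from the study.
june = {
    Endpoint("billing", "GET", "/invoices"),
    Endpoint("billing", "POST", "/invoices"),
}
october = june | {Endpoint("billing", "DELETE", "/invoices/{id}")}

report = surface_delta(june, october)
print(report["growth_pct"])  # 50.0
print(report["added"])
```

Keeping the list of added endpoints alongside the percentage matters in practice, because a reviewer needs to know which endpoints are new, not only how many.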

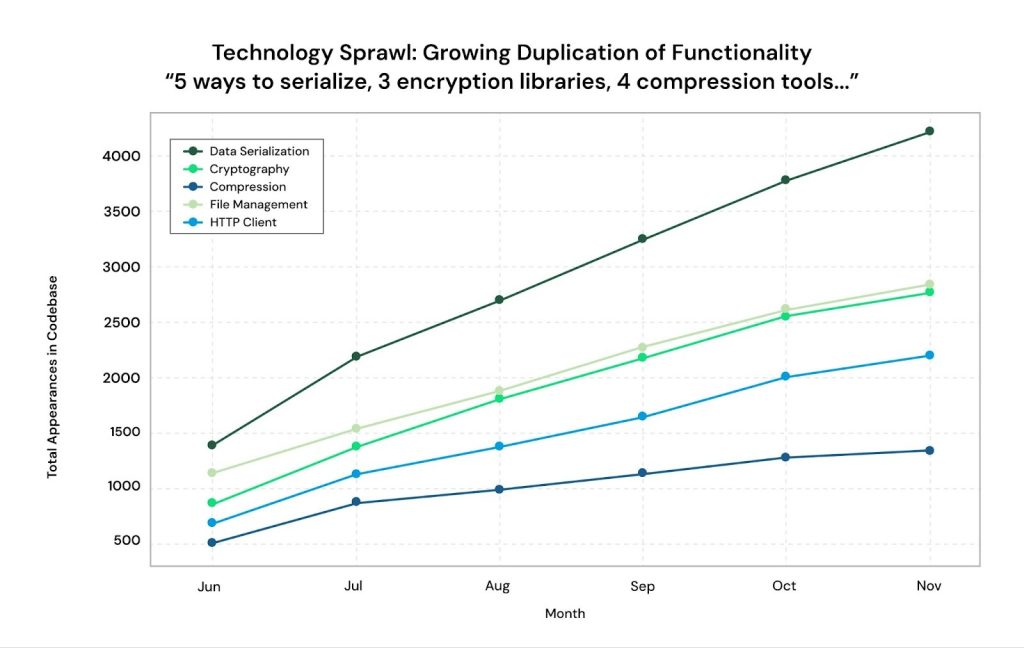

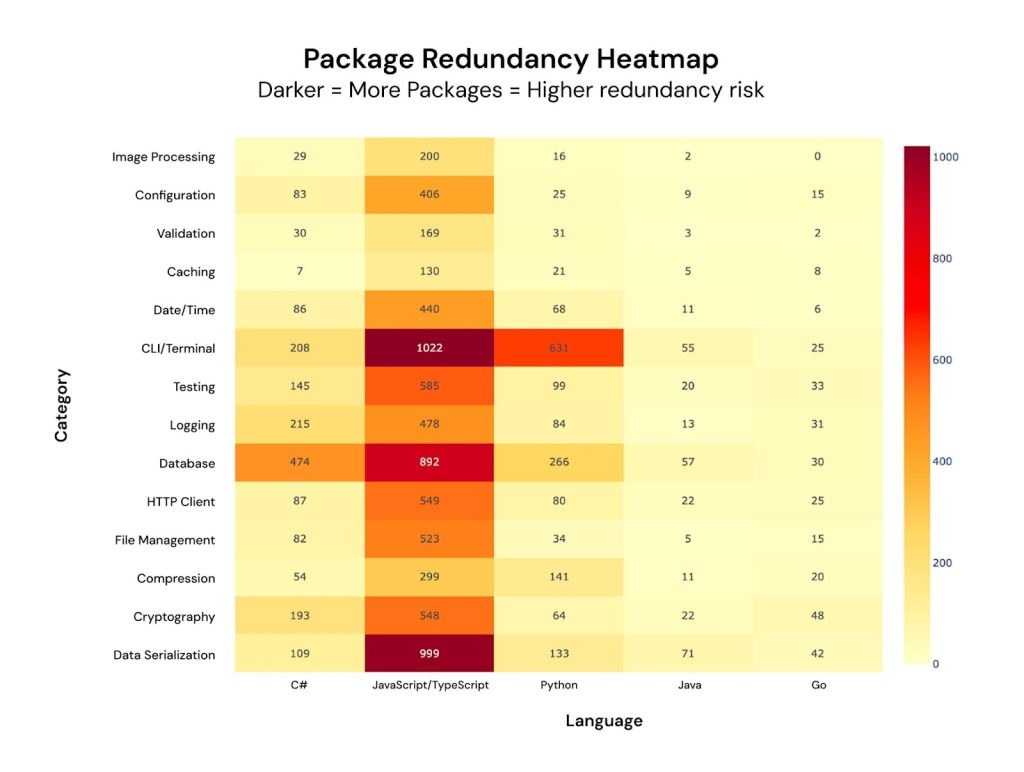

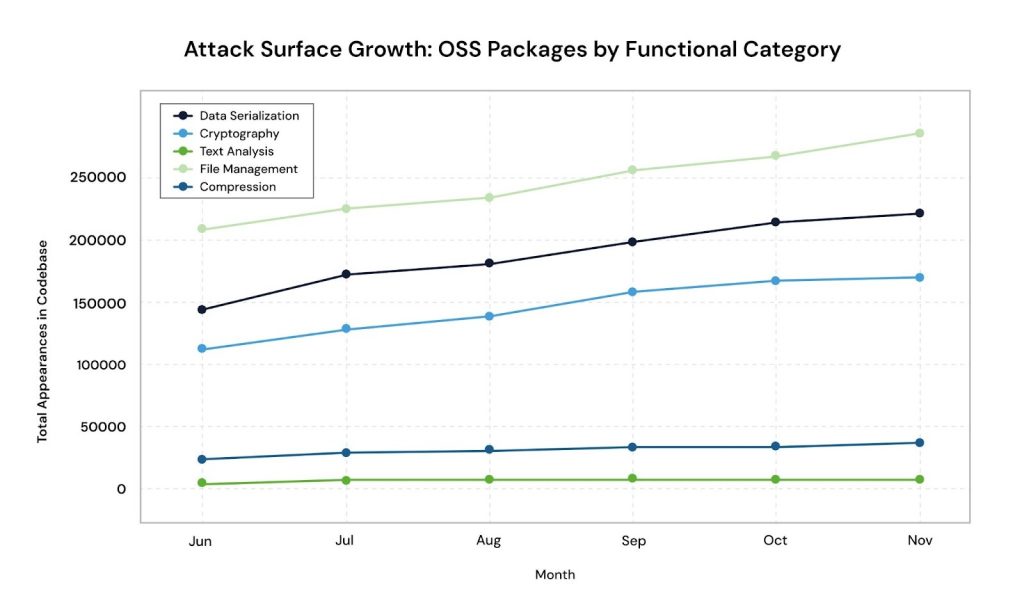

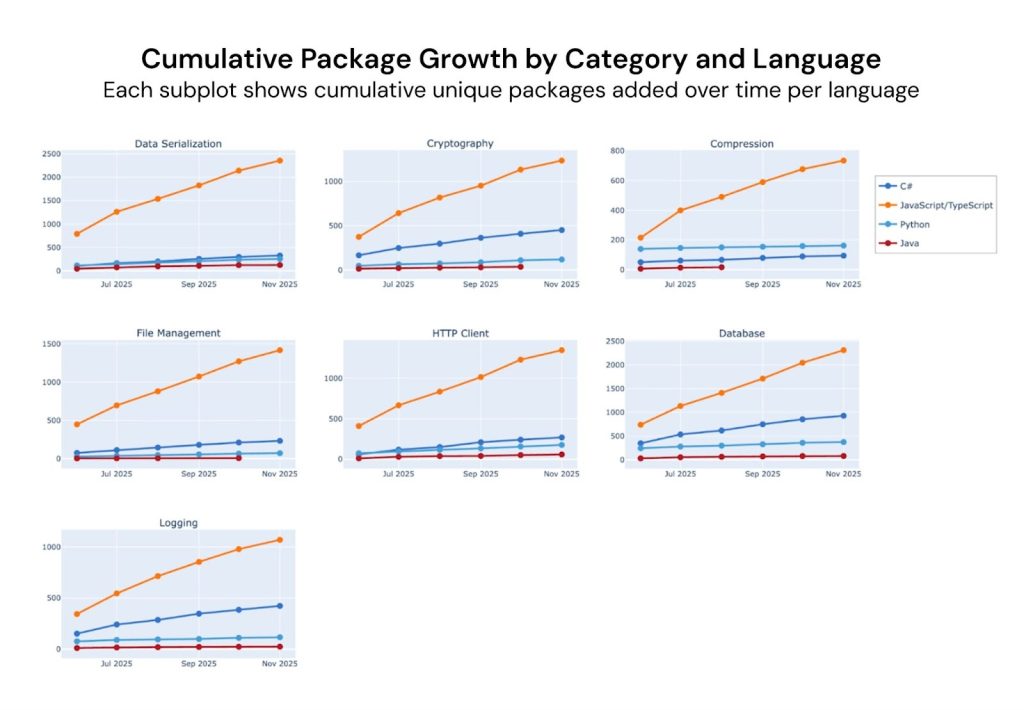

This analysis of 4.7 million OSS package records across 9,352 repositories reveals a concerning trend: organizations are experiencing significant package sprawl, where multiple packages perform the same function and dramatically expand the attack surface.

Across major functional categories such as database, data serialization, logging, testing, cryptography, HTTP clients, and compression, organizations are using hundreds to thousands of different packages for the same purpose.

This leads to more attack vectors, more components to patch, a higher risk of vulnerable dependencies, and greater complexity during security audits.
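Package sprawl of this kind can be surfaced with a simple grouping pass over a dependency inventory. The sketch below assumes a hand-maintained mapping from package name to functional category (`CATEGORY_OF`) and a hypothetical `find_sprawl` helper. A real inventory would need a far larger, curated mapping, and the repository names are made up.

```python
from collections import defaultdict

# Hypothetical mapping from package name to functional category.
CATEGORY_OF = {
    "requests": "http-client",
    "httpx": "http-client",
    "urllib3": "http-client",
    "loguru": "logging",
    "structlog": "logging",
    "pytest": "testing",
}


def find_sprawl(
    packages_by_repo: dict[str, list[str]], max_per_category: int = 1
) -> dict[str, list[str]]:
    """Return categories served by more distinct packages than allowed."""
    seen: dict[str, set[str]] = defaultdict(set)
    for packages in packages_by_repo.values():
        for name in packages:
            category = CATEGORY_OF.get(name)
            if category is not None:  # uncategorized packages are skipped
                seen[category].add(name)
    return {
        category: sorted(names)
        for category, names in seen.items()
        if len(names) > max_per_category
    }


# Illustrative inventory only; not taken from the study.
inventory = {
    "checkout-service": ["requests", "pytest", "loguru"],
    "search-service": ["httpx", "pytest", "structlog"],
    "batch-jobs": ["urllib3"],
}
sprawl = find_sprawl(inventory)
print(sprawl)
```

Here the organization ends up with three HTTP clients and two logging libraries, while testing stays on a single package and is not flagged.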

The data indicates continuous growth in package usage, with the fastest acceleration in:

These trends demonstrate a steadily expanding attack surface across the entire organization.

AI coding assistants prioritize paths of least resistance when fulfilling prompts. This leads to unchecked proliferation of packages, and suggestions of unapproved or disused dependencies.

The result can be, for example, three different HTTP libraries doing the same job, which creates three attack vectors and three times the remediation work.

| Metric | Copilot Repos | Non-Copilot Repos | Ratio |
| --- | --- | --- | --- |
| Average commits per repo | 349 | 195 | 1.79x |
| Average developers per repo | 6.6 | 2.7 | 2.46x |
| Average OSS packages per repo | 832 | 397 | 2.09x |
| OSS packages per commit | 4.16 | 2.91 | 1.43x |

| Metric | Finding | Security Implication |
| --- | --- | --- |
| Commits per session | +18% higher with Copilot | More code = more attack surface |
| Top improvers | Up to 7x productivity gains | Faster code generation = faster sprawl |
| Developer improvement rate | 44% showed gains after Copilot | Widespread acceleration of code output |

AI coding assistants deliver real productivity gains: 44% of developers showed measurable improvement, and top performers achieved productivity multipliers of up to 7x.

However, this velocity comes at a cost.

| Risk Indicator | Copilot Repos | Non-Copilot Repos | Premium |
| --- | --- | --- | --- |
| OSS per commit | 4.16 | 2.91 | +43% |
| High-OSS likelihood | 32.5% | 16.1% | +102% |
| Avg packages per repo | 832 | 397 | +110% |
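The premium column appears to be the relative increase of the Copilot figure over the non-Copilot baseline. As a quick check under that assumption, the sketch below recomputes it from the rounded values in the table. The `premium_pct` helper is a name introduced here for illustration.

```python
def premium_pct(copilot: float, baseline: float) -> int:
    """Relative increase of the Copilot figure over the baseline, in whole percent."""
    return round((copilot / baseline - 1) * 100)


# Figures copied from the risk indicator table above.
rows = {
    "OSS per commit": (4.16, 2.91),
    "High-OSS likelihood": (32.5, 16.1),
    "Avg packages per repo": (832, 397),
}
premiums = {name: premium_pct(c, b) for name, (c, b) in rows.items()}
print(premiums)
# {'OSS per commit': 43, 'High-OSS likelihood': 102, 'Avg packages per repo': 110}
```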

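Copilot-active repositories show 43% more OSS packages per commit, roughly twice the likelihood of falling into the high-OSS group (32.5% versus 16.1%), and 110% more packages per repository on average.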

In summary, between June 2023 and November 2025

In an AI-driven environment, you cannot secure what you cannot see. The sheer speed of code generation requires Application Security Posture Management (ASPM) to provide a real-time map of your environment.

The best time to stop sprawl is before the developer hits “Merge.” This requires moving security feedback into the “Developer Moment.”
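One way to picture feedback before merge is a check that compares the dependencies on the base branch with those on the proposed change and flags anything new that is not on an approved list. The following is a minimal sketch under several assumptions: dependencies are pinned as simple `name==version` lines, the allowlist `APPROVED` is hypothetical, and the helper names are invented for illustration. It does not describe how any particular product implements this.

```python
def parse_requirements(text: str) -> set[str]:
    """Extract bare package names from simple 'name==version' lines."""
    names = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if line:
            names.add(line.split("==", 1)[0].strip().lower())
    return names


def new_unapproved(base_deps: set[str], head_deps: set[str], approved: set[str]) -> list[str]:
    """Dependencies introduced by a change that are not on the approved list."""
    introduced = head_deps - base_deps
    return sorted(introduced - approved)


APPROVED = {"requests", "pytest"}  # hypothetical allowlist

base = parse_requirements("requests==2.32.0\npytest==8.2.0\n")
head = parse_requirements(
    "requests==2.32.0\npytest==8.2.0\nhttpx==0.27.0  # added by the change\n"
)

violations = new_unapproved(base, head, APPROVED)
print(violations)  # ['httpx']
```

Checking only the newly introduced packages keeps the feedback focused on the change under review, so existing dependencies that predate the policy do not block every merge.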

When AI generates code at 7x speed, security teams cannot manually triage results. You must fight AI with AI.

The research shows that developers using AI often feel less responsible for the code they “co-author.”

We cannot put the AI genie back in the bottle, nor, as the data shows, should we want to try. The enormous productivity boosts enabled by AI-assisted development are here to stay.

But so are the risks, unless security leaders take steps to mitigate them.

The future of securing AI code is all about context, resilience, and accountability. Only by giving security teams the safeguards and training they need to deploy AI-assisted code responsibly can we maximize the benefits while minimizing attack surface growth and preventing risk.

Trusted by hundreds of global enterprises – including Shell, USAA, and BlackRock – and recognized by Gartner, IDC, and F&S as an ASPM leader, Apiiro is the Agentic Application Security platform built for the AI era.
